These are my very rough notes from day 3 of the inaugural Australasian Association for Digital Humanities conference (see also Quick and dirty Digital Humanities Australasia notes: day 1 and Quick and dirty Digital Humanities Australasia notes: day 2) held at Canberra’s Australian National University at the end of March.

We were welcomed to Day 3 by the ANU’s Professor Marnie Hughes-Warrington (who expressed her gratitude for the methodological and social impact of digital humanities work) and Dr Katherine Bode. The keynote was Dr Julia Flanders on ‘Rethinking Collections’, AKA ‘in praise of collections’… [See also Axel Bruns’ live blog.]

She started by asking what we mean by a ‘collection’. What’s the utility of the term? What’s the cultural significance of collections? The term speaks of agency, motive, and implies the existence of a collector who creates order through selectivity. Sites like eBay, Flickr, Pinterest are responding to a weirdly deep-seated desire to reassert the ways in which things belong together. The term ‘collection’ implies that a certain kind of completeness may be achieved. Each item is important in itself and also in relation to other items in the collection.

There’s a suite of expected activities and interactions in the genre of digital collections, projects, etc. They’re deliberate aggregations of materials that bear, demand individual scrutiny. Attention is given to the value of scale (and distant reading) which reinforces the aggregate approach…

She discussed the value of deliberate scope, deliberate shaping of collections, not craving ‘everythingness’. There might also be algorithmically gathered collections…

She discussed collections she has to do with – TAPAS, DHQ, Women Writers Online – all using flavours of TEI, the same publishing logic, component stack, providing the same functionality in the service of the same kinds of activities, though they work with different materials for different purposes.

What constitutes a collection? How are curated collections different to user-generated content or just-in-time collections? Back ‘then’, collections were things you wanted in your house or wanted to see in the same visit. What does the ‘now’ of collections look like? Decentralisation in collections ‘now’… technical requirements are part of the intellectual landscape, part of larger activities of editing and design. A crucial characteristic of collections is the variety of philosophical urgency they respond to.

The electronic operates under the sign of limitless storage… potentially boundless inclusiveness. Design logic is a craving for elucidation, more context, the ability for the reader to follow any line of thought they might be having and follow it to the end. Unlimited informational desire, closing in of intellectual constraints. How do boundedness and internal cohesion help define the purpose of a collection? Deliberate attempt at genre not limited by technical limitations. Boundedness helps define and reflect philosophical purpose.

What do we model when we design and build digital collections? We’re modelling the agency through which the collection comes into being and is sustained through usage. Design is a collection of representational practices, item selection, item boundaries and contents. There’s a homogeneity in the structure, the markup applied to items. Item-to-item interconnections – there’s the collection-level ‘explicit phenomena’ – the directly comparable metadata through which we establish cross-sectional views through the collection (eg by Dublin Core fields) which reveal things we already know about texts – authorship of an item, etc. There’s also collection-level ‘implicit phenomena’ – informational commonalities, patterns that emerge or are revealed through inspection; change shape imperceptibly through how data is modelled or through software used [not sure I got that down right]; they’re always motivated so always have a close connection with method.
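To make the ‘explicit phenomena’ idea concrete for myself, here is a minimal sketch of a cross-sectional view built from directly comparable metadata. The field names `title`, `creator` and `date` are Dublin Core elements, but the records, the `cross_section` helper and the placeholder values are all my own invention for illustration, not anything shown in the keynote.

```python
from collections import defaultdict

# Hypothetical items described with a few Dublin Core-style fields.
records = [
    {"title": "Item one", "creator": "Author A", "date": "1650"},
    {"title": "Item two", "creator": "Author B", "date": "1650"},
    {"title": "Item three", "creator": "Author A", "date": "1700"},
]


def cross_section(records, field):
    """Group item titles by the value of one shared metadata field."""
    view = defaultdict(list)
    for record in records:
        # Items missing the field are gathered under "unknown".
        view[record.get(field, "unknown")].append(record["title"])
    return dict(view)


by_creator = cross_section(records, "creator")
by_date = cross_section(records, "date")
```

A view like `by_creator` only reveals what the metadata already states (who wrote what), which is the contrast with the ‘implicit phenomena’ that emerge through inspection.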

Readerly knowledge – what can the collection assume about what the reader knows? A table of contents is only useful if you can recognise the thing you want to find in it – they’re not always self-evident. How does the collection’s modelling affect us as readers? Consider the effects of choices on the intellectual ecology of the collection, including its readers. Readerly knowledge has everything to do with what we think we’re doing in digital humanities research.

The Hermeneutics of Screwing Around (pdf). Searching produces a dynamically located just-in-time collection… Search is an annoying guessing game with a passive-aggressive collection. But we prefer to ask a collection to show its hand in a useful way (i.e. browse)… Search -> browse -> explore.

What’s the cultural significance of collections? She referenced Liu’s Sidney’s Technology… A network as flow of information via connection, perpetually ongoing contextualisation; a patchwork is understood as an assemblage, it implies a suturing together of things previously unrelated. A patchwork asserts connections by brute force. A network assumes that connections are there to be discovered, connected to. Patchwork, mosaic – connects pre-existing nodes that are acknowledged to be incommensurable.

We avow the desirability of the network, yet we’re aware of the itch of edge cases, data that can’t be brought under rule. What do we treat as noise and what as signal, what do we deny is the meaning of the collection? Is exceptionality or conformance to type the most significant case? On twitter, @aylewis summarised this as ‘Patchworking metaphor lets us conceptualise non-conformance as signal not noise’

Pay attention to the friction in the system, rather than smoothing it over. Collections both express and support analysis. Expressing theories of genre etc in internal modelling… Patchwork – the collection articulates the scholarly interest that animated its creation but also interests of the reader… The collection is animated by agency, is modelled by it, even while it respects the agency we bring as readers. Scholarly enquiry is always a transaction involving agency on both ends.

My (not very good) notes from discussion afterwards… there was a question about digital femmage; discussion of the tension between the desire for transparency and the desire to permit many viewpoints on material while not disingenuously disavowing the roles in shaping the collection; the trend at one point for factoids rather than narratives (but people wanted the editors’ view as a foundation for what they do with that material); the logic of the network – a collection as a set of parameters not as a set of items; Alan Liu’s encouragement to continue with theme of human agency in understanding what collections are about (e.g. solo collectors like John Soane); crowdsourced work is important in itself regardless of whether it comes up with the ‘best’ outcome, by whatever metric. Flanders: ‘the commitment to efficiency is worrisome to me, it puts product over people in our scale of moral assessment’ [hoorah! IMO, engagement is as important as data in cultural heritage]; a question about the agency of objects, with the answer that digital surrogates are carriers of agency, the question is how to understand that in relation to object agency?

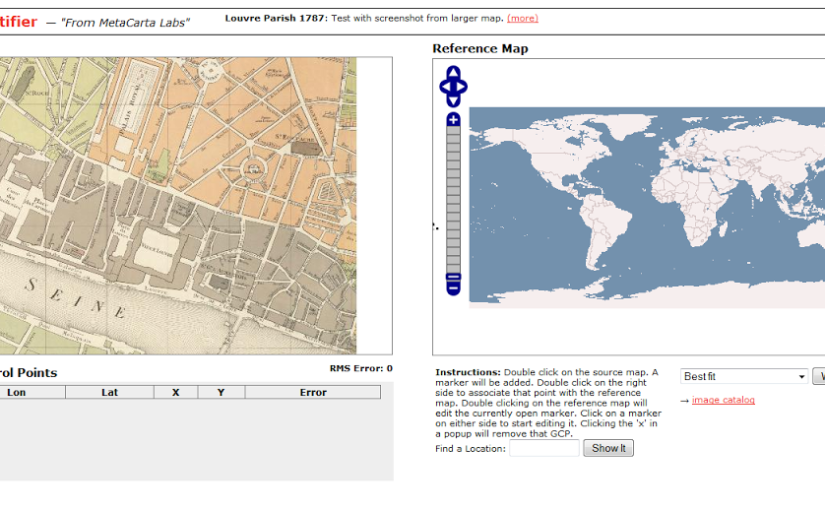

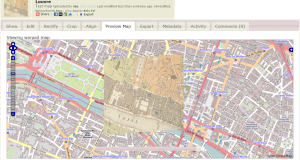

GIS and Mapping I

The first paper was ‘Mapping the Past in the Present’ by Andrew Wilson, which was a fast run-through of some lovely examples based on Sydney’s geo-spatial history. He discussed the spatial turn in history, and the mid-20thC shift to broader scales, territories of shared experience, the on-going concern with the description of space, its experience and management.

He referenced Deconstructing the map, Harley, 1989, ‘cartography is seldom what the cartographers say it is’. All maps are lies. All maps have to be read, closely or distantly. He referenced Grace Karskens’ On the rocks and discussed the reality of maps as evidence, an expression of European expansion; the creation of the maps is an exercise in power. Maps must be interpreted as evidence. He talked about deriving data from historic maps, using regressive analysis to go back in time through the sources. He also mentioned TGIS – time-enabled GIS. Space-time composite model – when you have lots and lots of temporal changes, you create a polygon that describes every change in the sequence.
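As I understand the space-time composite idea, you overlay every dated snapshot and keep the smallest areas that share one history of change. The sketch below is my own simplification, not Wilson's method: it uses grid cells instead of real polygons, and the years, land-use labels and the `space_time_composite` function are hypothetical.

```python
from collections import defaultdict


def space_time_composite(snapshots):
    """Group cells into units that share an identical history.

    snapshots: list of (year, {cell: land_use}) in chronological order.
    Returns {history: set_of_cells}, where history is a tuple of
    (year, land_use) pairs covering every snapshot.
    """
    all_cells = set()
    for _, cover in snapshots:
        all_cells.update(cover)

    units = defaultdict(set)
    for cell in all_cells:
        # A cell absent from a snapshot gets None for that year.
        history = tuple((year, cover.get(cell)) for year, cover in snapshots)
        units[history].add(cell)
    return dict(units)


# Hypothetical strip of five cells mapped at three dates.
snapshots = [
    (1800, {(0, 0): "bush", (1, 0): "bush", (2, 0): "bush", (3, 0): "bush", (4, 0): "bush"}),
    (1850, {(0, 0): "farm", (1, 0): "farm", (2, 0): "bush", (3, 0): "bush", (4, 0): "bush"}),
    (1900, {(0, 0): "housing", (1, 0): "farm", (2, 0): "farm", (3, 0): "bush", (4, 0): "bush"}),
]

composite = space_time_composite(snapshots)
unchanged = composite[((1800, "bush"), (1850, "bush"), (1900, "bush"))]
```

Each key in `composite` is one complete sequence of change, so a single lookup answers ‘what happened here over time?’ without comparing snapshots again. With real data the units would be polygons produced by a GIS overlay rather than sets of cells.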

The second paper was ‘Reading the Text, Walking the Terrain, Following the Map: Do We See the Same Landscape?’ by Øyvind Eide. He said that viewing a document and seeing a landscape are often represented as similar activities… but seeing a landscape means moving around in it, being an active participant. Wood (2010) on the explosion of maps around 1500 – part of the development of the modern state. We look at older maps through modern eyes – maps weren’t made for navigation but to establish the modern state.

He’s done a case study on text v maps in Scandinavia, 1740s. What is lost in the process of converting text to maps? Context, vagueness, under-specification, negation, disjunction… It’s a combination of too little and too much. Text has information that can’t fit on a map and text that doesn’t provide enough information to make a map. Under-specification is when a verbal text describes a spatial phenomenon in a way that can be understood in two different ways by a competent reader. How do you map a negative feature of a landscape? i.e. things that are stated not to be there. ‘Or’ cannot be expressed on a map… Different media, different experiences – each can mediate only certain aspects of total reality (Ellestrom 2010).

The third paper was ‘Putting Harlem on the Map’ by Stephen Robertson. This article on ‘Writing History in the Digital Age’ is probably a good reference point: Putting Harlem on the Map; the site is at Digital Harlem. The project sources were police files, newspapers, organisational archives… They were cultural historians, focussed on individual level data, events, what it was like to live in Harlem. It was one of the first sites to employ the geo-spatial web rather than GIS software. Information was extracted and summarised from primary sources, [but] it wasn’t a digitisation project. They presented their own maps and analysis apart from the site to keep it clear for other people to do their work. After assigning a geo-location it is then possible to compare it with other phenomena from the same space. They used sources that historians typically treat as ephemera such as society or sports pages as well as the news in newspapers.

He showed a great list of event types they’ve gotten from the data… Legal categories disaggregate crime so it appears more often in the list, though it was a minority of the data. Location types also offer a picture of the community.

Creating visualisations of life in the neighbourhood… when mapping at this detailed scale they were confronted with how vague most historical sources are and how they’re related to other places. ‘Historians are satisfied in most cases to say that a place is ‘somewhere in Harlem’.’ He talked about visualisations as ‘asking, but not explaining, why there?’.

I tweeted that I’d gotten a lot more from his demonstration of the site than I had from looking at it unaided in the past, which led to a discussion with @claudinec and @wragge about whether the ‘search vs browse’ accessibility issue applies to geospatial interfaces as well as text or images (i.e. what do you need to provide on the first screen to help people get into your data project) and about the need for as many hooks into interfaces as possible, including narratives as interfaces.

Crowdsourcing was raised during the questions at the end of the session, but I’ve forgotten who I was quoting when I tweeted, ‘by marginalising crowdsourcing you’re marginalising voices’, on the other hand, ‘memories are complicated’. I added my own point of view, ‘I think of crowdsourcing as open source history, sometimes that’s living memory, sometimes it’s research or digitisation’. If anything, the conference confirmed my view that crowdsourcing in cultural heritage generally involves participating in the same processes as GLAM staff and humanists, and that it shouldn’t be exploitative or rely on user experience tricks to get participants (though having made crowdsourcing games for museums, I obviously don’t have a problem with making the process easier to participate in).

The final paper I saw was Paul Vetch, ‘Beyond the Lowest Common Denominator: Designing Effective Digital Resources’. He discussed the design tensions between: users, audiences (and ‘production values’); ubiquity and trends; experimentation (and failure); sustainability (and ‘the deliverable’).

In the past digital humanities has compartmentalised groups of users in a way that’s convenient but not necessarily valid. But funding pressure to serve wider audiences means anticipating lots of different needs. He said people make value judgements about the quality of a resource according to how it looks.

Ubiquity and trends: understanding what users already use; designing for intuition. Established heuristics for web design turn out to be completely at odds with how users behave.

Funding bodies expect deliverables, this conditions the way they design. It’s difficult to combine: experimentation and high production values [something I’ve posted on before, but as Vetch said, people make value judgements about the quality of a resource according to how it looks so some polish is needed]; experimentation and sustainability…

Who are you designing for? Not the academic you’re collaborating with, and it’s not to create something that you as a developer would use. They’re moving away from user testing at the end of a project to doing it during the project. [Hoorah!]

Ubiquity and trends – challenges include a very highly mediated environment; highly volatile and experimental… Trying to use established user conventions becomes stifling. (He called useit.com ‘old nonsense’!) The ludic and experiential are increasingly important elements in how we present our research back.

Mapping Medieval Chester took technology designed for delivering contextual ads and used it to deliver information in context without changing perspective (i.e. without reloading the page, from memory). The Gough map was an experiment in delivering a large image but also in making people smile. Experimentation and failure… Online Chopin Variorum Edition was an experiment. How is the ‘work’ concept challenged by the Chopin sources? Technical/methodological objectives: superimposition; juxtaposition; collation/interpolation…

He discussed coping strategies for the Digital Humanities: accept and embrace the ephemerality of web-based interfaces; focus on process and experience – the underlying content is persistent even if the interfaces don’t last. I think this was a comment from the audience: ‘if a digital resource doesn’t last then it breaks the principle of citation – where does that leave scholarship?’

Summary

This was my first proper big Digital Humanities conference, and I had a great time. It probably helped that I’m an Australian expat so I knew a sprinkling of people and had a sense of where various institutions fitted in, but the crowd was also generally approachable and friendly.

I was also struck by the repetition of phrases like ‘the digital deluge’, the ‘tsunami of data’ – I had the feeling there’s a barely managed anxiety about coping with all this data. And if that’s how people at a digital humanities conference felt, how must less-digital humanists feel?

I was pleasantly surprised by how much digital history content there was, and even more pleasantly surprised by how many GLAMy people were there, and consequently how much the experience and role of museums, libraries and archives was reflected in the conversations. This might not have been as obvious if you weren’t on twitter – there was a bigger disconnect between the back channel and conversations in the room than I’m used to at museum conferences.

As I mentioned in my day 1 and day 2 posts, I was struck by the statement that ‘history is on a different evolutionary branch of digital humanities to literary studies’, partly because even though I started my PhD just over a year ago, I’ve felt the title will be outdated within a few years of graduation. I can see myself being more comfortable describing my work as ‘digital history’ in future.

I have to finish by thanking all the speakers, the programme committee, and in particular, Dr Paul Arthur and Dr Katherine Bode, the organisers and the aaDH committee – the whole event went so smoothly you’d never know it was the first one!

And just because I loved this quote, one final tweet from @mikejonesmelb: Sir Ken Robinson: ‘Technology is not technology if it was invented before you were born’.